Introduction to Natural Language Processing

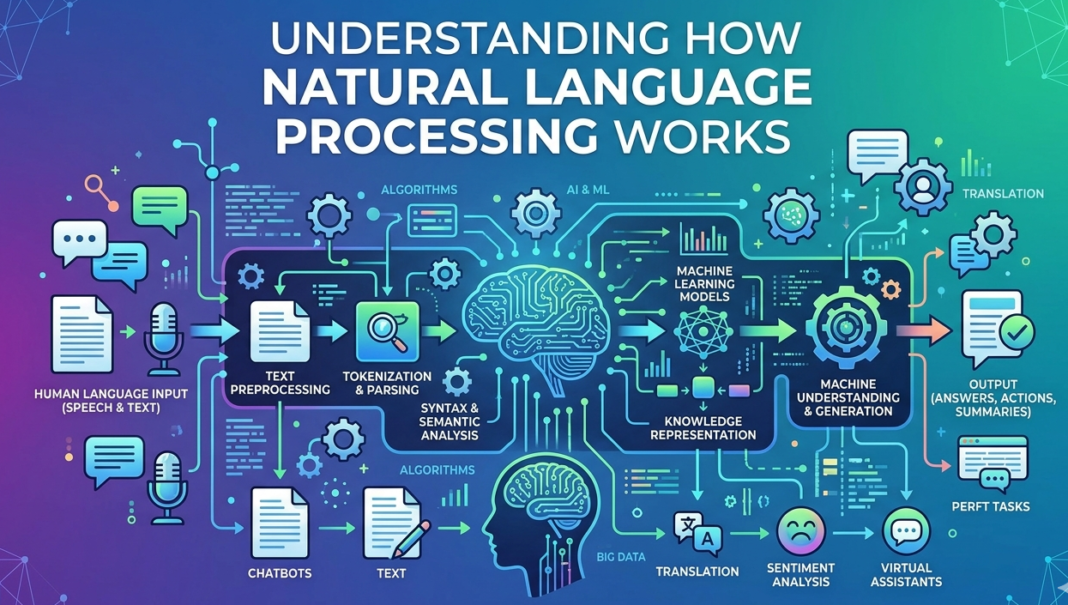

Natural Language Processing (NLP) is a subfield of artificial intelligence that focuses on the interaction between computers and human language. The primary objective of NLP is to enable computers to understand, interpret, and generate human language in a meaningful way. This technology plays a crucial role in bridging the communication gap between humans and machines, making it easier for users to interact with various applications.

The significance of NLP is becoming increasingly evident as the digital age evolves. With the exponential growth of data generated from text and speech, efficient processing of natural language is essential for extracting valuable information. Applications of NLP can be found in a variety of fields, including customer service, healthcare, education, and content creation.

One of the most recognizable applications of NLP is in the development of chatbots. These intelligent systems utilize NLP algorithms to provide users with assistance and information, simulating human-like conversations. Companies leverage chatbots to enhance customer engagement and streamline service provision, highlighting the relevance of NLP in modern business operations.

Another remarkable application lies in machine translation, where NLP techniques are employed to convert text from one language to another. Services such as Google Translate utilize sophisticated algorithms and large datasets to improve translation accuracy, showcasing NLP’s ability to transcend language barriers.

Sentiment analysis is yet another domain where NLP plays a vital role, enabling businesses to interpret and gauge public sentiment regarding products or services. By analyzing social media posts and reviews, organizations can obtain insights that inform marketing strategies and product development.

NLP already underpins chatbots, translation services, and sentiment analysis tools. As technology continues to advance, it will likely play an even more significant role in enhancing human-computer interactions and solving complex language-related challenges.

The Basics of Language and Linguistics

Language is a complex system of communication that integrates various elements to convey meaning. It can be dissected into three primary components: syntax, semantics, and pragmatics. Understanding these components is fundamental to the field of natural language processing (NLP), which strives to create algorithms that can understand and interpret human language.

Syntax refers to the structure of language, which includes the rules that dictate how words are arranged to form sentences. It is essential for determining grammatical correctness and the relationships between different parts of speech. In the realm of NLP, syntax is crucial, as it influences how text is parsed and understood by algorithms. Parsing techniques, which analyze the syntactic structure of sentences, are foundational in applications like machine translation and grammar checking.

On the other hand, semantics deals with the meaning of words and phrases, focusing on the interpretation of sentences as a whole. Understanding semantics is critical for NLP because it allows machines to grasp what a sentence implies rather than just how it is structured. For instance, the word “bank” can refer to a financial institution or the side of a river, demonstrating the need for sophisticated algorithms that can derive meaning based on context.
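To make the “bank” example concrete, here is a minimal sketch of context-based disambiguation in Python. It loosely follows the idea behind the Lesk algorithm: pick the sense whose definition shares the most words with the surrounding sentence. The two glosses, the stopword list, and the function names are hypothetical and written only for this illustration.

```python
import re

# Hypothetical, hand-written sense glosses for illustration only.
SENSES = {
    "financial": "an institution that accepts deposits and lends money to customers",
    "river": "the sloping land alongside a river or stream of water",
}

# Tiny assumed stopword list; a real system would use a fuller one.
STOPWORDS = {"a", "an", "and", "at", "of", "or", "that", "the", "to", "i", "we", "on"}


def words(text):
    """Lowercase a string and return its set of non-stopword words."""
    return set(re.findall(r"[a-z]+", text.lower())) - STOPWORDS


def disambiguate_bank(sentence):
    """Pick the sense whose gloss shares the most words with the sentence."""
    context = words(sentence)
    return max(SENSES, key=lambda sense: len(context & words(SENSES[sense])))


print(disambiguate_bank("I deposited money at the bank"))
print(disambiguate_bank("We sat on the bank of the river and watched the water"))
```

The first sentence overlaps with the financial gloss on “money” and the second overlaps with the river gloss on “river” and “water”. When nothing overlaps, this sketch simply falls back to the first sense listed, which is one reason practical systems rely on learned context representations instead of raw word overlap.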

The third component, pragmatics, pertains to the context in which language is used. It encompasses the social and cultural nuances that influence how language is interpreted. For example, a statement that may seem straightforward can have different implications depending on who is speaking or the situation in which it is uttered. Incorporating pragmatic understanding into NLP models enables better handling of idioms, humor, and sarcasm, which are often challenging for machines to decode accurately.

In summary, the interplay of syntax, semantics, and pragmatics forms the backbone of language and linguistics. A thorough understanding of these elements is imperative for the continued development of effective natural language processing algorithms, facilitating more intuitive interactions between humans and machines.

Key Technologies Behind NLP

Natural Language Processing (NLP) integrates various technologies and methodologies that allow machines to understand and interpret human language. At the heart of these advancements are machine learning and deep learning, both of which have revolutionized the way computers process language data.

Machine learning, a subset of artificial intelligence, involves training algorithms using large datasets to recognize patterns and make predictions. In the context of NLP, machine learning algorithms analyze vast amounts of text data, learning the structure of language, identifying parts of speech, and understanding relationships between words. Techniques such as supervised and unsupervised learning are commonly employed to enhance a machine’s ability to process and generate human-like responses.

Deep learning, on the other hand, takes machine learning a step further by employing neural networks with many layers. These deep neural networks can model complex relationships in data, making them particularly effective for tasks such as sentiment analysis, language translation, and speech recognition. Convolutional Neural Networks (CNNs) and Recurrent Neural Networks (RNNs) are notable architectures that help tackle different aspects of language processing, allowing for both context-sensitive and sequential understanding of text.

Moreover, various algorithms play a critical role in NLP tasks. For instance, the Hidden Markov Model (HMM) has long been used in speech recognition, while the Transformer architecture underlies today’s large language models. Transformer-based models have reshaped NLP by enabling tasks such as text generation and summarization through mechanisms like attention, which allow them to focus on the most relevant parts of the input.
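The attention idea can be sketched in a few lines of plain Python. The example below implements scaled dot-product attention for a single query vector: each key is scored against the query, the scores are turned into weights with a softmax, and the output is the weighted average of the values. The function names and the toy vectors are assumptions made for this illustration, and real models run this over large matrices with learned projections.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(query, keys, values):
    """Scaled dot-product attention for one query vector."""
    scale = math.sqrt(len(query))
    # Similarity of the query to each key, scaled by sqrt(dimension).
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    # Weighted average of the value vectors.
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output


weights, output = attention(
    query=[1.0, 0.0],
    keys=[[1.0, 0.0], [0.0, 1.0]],
    values=[[10.0, 0.0], [0.0, 10.0]],
)
print(weights, output)
```

Because the query points in the same direction as the first key, the first value receives the larger weight. That is the sense in which the model “focuses” on the more relevant part of the input.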

As we explore further into the realm of NLP, understanding these core technologies will provide insights into how language is analyzed and processed, paving the way for advancements in communication between humans and machines.

Data Collection and Preprocessing

Data collection is a critical initial step in the development of Natural Language Processing (NLP) applications. In this phase, various types of data are gathered to train algorithms that can interpret and analyze human language. One of the primary sources of data is text corpora, which may include books, articles, websites, social media posts, and more. These sources are rich in linguistic content and provide diverse examples of language usage. Additionally, real-time data, such as tweets, news feeds, and online conversations, can be captured to reflect current language trends and usage patterns.

After gathering the necessary data, preprocessing is essential to ensure that it is clean and structured before it can be utilized in NLP tasks. This involves several techniques aimed at improving the quality of the input data. Tokenization is one key preprocessing step, where text is broken down into smaller units, typically words or phrases, known as tokens. This enables the NLP model to better analyze and understand the linguistic components of the text.
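As a rough illustration of tokenization, here is a minimal regex-based tokenizer using only Python’s standard library. The pattern is an assumption chosen for this sketch: it keeps words (including simple contractions written with a straight apostrophe) and emits each punctuation mark as its own token. Production tokenizers handle many more cases.

```python
import re


def tokenize(text):
    """Split text into lowercase word tokens and single punctuation tokens."""
    return re.findall(r"[a-z0-9]+(?:'[a-z]+)?|[^\w\s]", text.lower())


print(tokenize("NLP isn't magic, is it?"))
# ['nlp', "isn't", 'magic', ',', 'is', 'it', '?']
```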

Another important technique is stemming, which reduces words to a base form by stripping suffixes. For instance, “running” and “runs” are both stemmed to “run.” This helps normalize the data by collapsing inflected variants of the same word. Because stemmers apply mechanical rules, they cannot handle irregular forms such as “ran,” and the stems they produce are not always real words. Lemmatization addresses this by using vocabulary and part-of-speech information to return the correct dictionary form, or lemma. For example, the adjective “better” is lemmatized to “good.” Together, tokenization, stemming, and lemmatization play a significant role in the data preprocessing phase of most NLP applications.
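The difference between the two techniques is easier to see in code. The sketch below is a deliberately crude toy, not a real stemmer such as Porter’s: `stem` strips one of three suffixes, and `lemmatize` consults a tiny hand-written table of irregular forms before falling back to the stemmer. The suffix list, the table, and both function names are hypothetical and exist only for this illustration.

```python
SUFFIXES = ("ing", "ed", "s")


def stem(word):
    """Crude stemmer: strip one known suffix, then undo a doubled final consonant."""
    for suffix in SUFFIXES:
        if word.endswith(suffix) and len(word) - len(suffix) >= 3:
            word = word[: -len(suffix)]
            # "running" -> "runn" -> "run"; keep endings like "ll" and "ss".
            if len(word) >= 2 and word[-1] == word[-2] and word[-1] not in "aeiouls":
                word = word[:-1]
            break
    return word


# Hypothetical lookup table of irregular forms, keyed by (word, part of speech).
IRREGULAR = {
    ("better", "adj"): "good",
    ("ran", "verb"): "run",
    ("mice", "noun"): "mouse",
}


def lemmatize(word, pos):
    """Dictionary lookup for irregular forms, falling back to the stemmer."""
    return IRREGULAR.get((word, pos), stem(word))


print(stem("running"), stem("ran"))
print(lemmatize("ran", "verb"), lemmatize("better", "adj"))
```

A purely suffix-based stemmer leaves an irregular form such as “ran” untouched, while the lemmatizer maps it to “run” because it knows the word and its part of speech.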

NLP Techniques and Models

Natural Language Processing (NLP) employs a variety of techniques and models to interpret and generate human language. The three principal categories are Rule-Based Models, Statistical Models, and Neural Networks, each with distinct strengths and weaknesses.

Rule-Based Models are among the oldest techniques in NLP. They rely on a set of handcrafted linguistic rules to analyze and process text. For instance, these models can employ grammar rules to parse sentences, enabling the extraction of meaningful information. The primary advantage of Rule-Based Models lies in their interpretability; the decision-making process is transparent due to the defined rules. However, they struggle with ambiguity and require extensive manual effort to develop and maintain, making them less scalable for large datasets.
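Here is a minimal sketch of what a rule-based approach can look like: a part-of-speech tagger driven by a short, ordered list of handcrafted patterns. The rules and tag names are hypothetical and far too small for real use, but they show both the transparency and the brittleness described above.

```python
import re

# Hypothetical hand-written rules, checked in order; the first match wins.
RULES = [
    (re.compile(r"^(the|a|an)$"), "DET"),
    (re.compile(r"^\d+$"), "NUM"),
    (re.compile(r".+ly$"), "ADV"),
    (re.compile(r".+(ing|ed)$"), "VERB"),
]


def tag(tokens):
    """Assign each token the tag of the first matching rule, else NOUN."""
    tagged = []
    for token in tokens:
        for pattern, label in RULES:
            if pattern.match(token.lower()):
                tagged.append((token, label))
                break
        else:
            # No rule matched: fall back to the default tag.
            tagged.append((token, "NOUN"))
    return tagged


print(tag(["The", "dog", "barked", "loudly"]))
# [('The', 'DET'), ('dog', 'NOUN'), ('barked', 'VERB'), ('loudly', 'ADV')]
```

Every decision can be traced back to a specific rule, which is the interpretability advantage. The same rules tag “family” as an adverb because it ends in “ly”, and patching each such exception by hand is exactly the maintenance burden that limits scalability.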

In contrast, Statistical Models leverage data-driven approaches to analyze language. By utilizing algorithms that learn from word patterns and occurrences, these models can handle large corpora of text with greater efficiency. Techniques such as Hidden Markov Models (HMMs) or n-grams exemplify statistical approaches. Their main advantage is the speed and adaptability to continuously evolving language use. Nevertheless, the reliance on probability means that statistical models may perform poorly in scenarios where there is insufficient training data or where language expresses nuanced meanings.
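As an illustration of the n-gram idea, the following sketch trains a bigram model on a three-sentence toy corpus and estimates the probability of one word following another. The corpus, the `<s>` and `</s>` boundary markers, and the function names are assumptions for this example, and no smoothing is applied.

```python
from collections import Counter, defaultdict


def train_bigrams(sentences):
    """Count how often each word follows each other word."""
    counts = defaultdict(Counter)
    for sentence in sentences:
        tokens = ["<s>"] + sentence.lower().split() + ["</s>"]
        for prev, curr in zip(tokens, tokens[1:]):
            counts[prev][curr] += 1
    return counts


def probability(counts, prev, curr):
    """Maximum-likelihood estimate of P(curr | prev); 0.0 for unseen contexts."""
    total = sum(counts[prev].values())
    return counts[prev][curr] / total if total else 0.0


corpus = ["the cat sat", "the cat ran", "the dog sat"]
model = train_bigrams(corpus)
print(probability(model, "the", "cat"))   # 2 of the 3 words after "the" are "cat"
print(probability(model, "the", "bird"))  # never seen, so the estimate is 0.0
```

The zero probability for a perfectly reasonable pair like “the bird” shows the data-sparsity weakness in miniature: without enough training data (or a smoothing technique), the model cannot account for anything it has not already seen.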

Neural Networks have gained significant traction in recent years, primarily due to advancements in computing power and the availability of large datasets. These models, particularly deep learning architectures, enable high-dimensional data representation and can capture complex patterns in language. While they can deliver superior performance in tasks such as translation and sentiment analysis, their disadvantages include a lack of interpretability and an extensive need for labeled training data. Ultimately, the choice of model within NLP should depend on the specific application requirements, available resources, and the desired balance between accuracy and interpretability.

Applications of NLP in Everyday Life

Natural Language Processing (NLP) has become an integral part of our daily routines, enhancing how we interact with technology. One of the most prominent applications of NLP is in virtual assistants such as Siri and Alexa. These assistants utilize NLP to understand and process natural language commands. Users can ask questions or give commands in everyday language, and the assistant interprets these requests to provide relevant responses or actions. For instance, asking, “What’s the weather like today?” prompts the assistant to access weather data and deliver the information succinctly.

Another significant application of NLP is found in translation services, notably Google Translate. This tool leverages NLP algorithms to translate text from one language to another, making cross-cultural communication more seamless. Users can input phrases in their native language, and the system utilizes NLP techniques to analyze the structure and semantics of the input, thereby generating a coherent translation in the desired language. This real-time translation capability not only aids travelers but also fosters international business relations.

Furthermore, NLP plays a crucial role in sentiment analysis, particularly in the marketing sector. Businesses now employ NLP tools to gauge consumer sentiment through social media posts and reviews. By analyzing text data, these tools can determine whether the overall sentiment surrounding a product or service is positive, negative, or neutral. For example, a company might use sentiment analysis to adjust its marketing strategies based on customer feedback gathered from social platforms, leading to improved engagement and customer satisfaction.
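A very simple way to picture sentiment analysis is a lexicon-based scorer, sketched below. It counts positive and negative words and flips the polarity of a word that directly follows a negation. The word lists are hypothetical and tiny, and commercial tools typically rely on trained models instead, so treat this as an illustration of the positive/negative/neutral idea only.

```python
import re

# Hypothetical mini-lexicon; real systems use much larger lists or trained models.
POSITIVE = {"good", "great", "love", "excellent", "happy"}
NEGATIVE = {"bad", "terrible", "hate", "poor", "slow"}
NEGATIONS = {"not", "never", "no"}


def sentiment(text):
    """Label text as positive, negative, or neutral by counting lexicon hits."""
    score = 0
    negate = False
    for word in re.findall(r"[a-z]+", text.lower()):
        if word in NEGATIONS:
            negate = True  # flip the polarity of the next word only
            continue
        polarity = (word in POSITIVE) - (word in NEGATIVE)
        score += -polarity if negate else polarity
        negate = False
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(sentiment("I love this phone, the camera is great"))  # positive
print(sentiment("The battery is not good"))                 # negative
print(sentiment("It arrived on Tuesday"))                   # neutral
```

The negation handling only looks one word ahead, so a phrase like “not very good” is scored as positive. Shortcomings of this kind are one reason sarcasm and nuance remain hard for simple approaches.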

Overall, the applications of NLP in everyday life are diverse and increasing. Its ability to facilitate communication, enhance user interactions, and analyze data is transforming the way individuals and businesses engage with technology.

Challenges in Natural Language Processing

Natural Language Processing (NLP) encompasses a wide range of challenges that researchers and developers must navigate to enhance the accuracy and efficacy of language understanding systems. One significant hurdle in this domain is the inherent ambiguity often found in natural language. Words can have multiple meanings depending on context, leading to potential misunderstandings. For instance, the term “bank” could refer to a financial institution or the side of a river, which underscores the necessity for NLP systems to assess context accurately to infer the intended meaning.

Moreover, cultural and contextual differences pose additional challenges in the deployment of NLP technologies. Language nuances can vary significantly between regions and communities, affecting how certain phrases or dialects are interpreted. For example, idiomatic expressions in one language may not have direct translations in another, complicating the language processing task. Thus, it becomes crucial for NLP models to consider these variances to avoid erroneous outputs and provide more relatable and inclusive interpretations, enhancing overall user experience.

Ethical issues related to bias within language models also represent a critical challenge in NLP. The data used for training these models can reflect societal biases, which may lead to biased outcomes in language understanding and generation. Research on reducing bias through improved dataset curation and training methods is ongoing, as developers strive to create more equitable systems. Addressing these ethical challenges not only enhances the reliability of NLP applications but also builds trust among users. The broader goal is language processing systems that are both more capable and less biased.

The Future of NLP

As we look forward to advancements in Natural Language Processing (NLP), it is evident that the interplay between artificial intelligence (AI), machine learning, and human-computer interaction will play a pivotal role in shaping its trajectory. Emerging trends in NLP hint at considerable developments, particularly in the refinement of conversational AI. With the proliferation of intelligent virtual assistants, the ability for machines to understand and generate human language is becoming increasingly sophisticated. This progression not only enhances user experience but also paves the way for more natural interactions between humans and machines.

The rise of deep learning techniques has significantly contributed to the advancement of NLP capabilities. By leveraging large datasets and powerful computational models, these techniques allow for improved language understanding, translation accuracy, and content generation. Furthermore, innovations in transfer learning, where knowledge gained while solving one problem is applied to another, are expected to benefit NLP applications across various domains by reducing the need for extensive task-specific training.

The future of NLP also holds promise for various industries, from healthcare to finance. For instance, in healthcare, NLP technologies can facilitate better patient communication, enhance clinical documentation, and streamline the management of electronic health records. In the finance sector, NLP applications are likely to revolutionize customer service interactions, automate document analysis, and enable sentiment analysis on financial news. As these industries continue to adopt NLP solutions, their operational efficiencies and customer engagements will likely transform dramatically.

In conclusion, the future of Natural Language Processing appears poised for exciting advancements driven by AI and machine learning. The potential of conversational AI is set to reshape the dynamics of human-computer interaction, making it more intuitive and effective. As NLP technologies evolve, their impact on various sectors will continue to expand, leading to innovations that can profoundly influence the way we communicate and engage with technology.

Conclusion and Summary

Natural Language Processing (NLP) is a vital field in artificial intelligence that bridges the gap between human communication and computer understanding. Throughout the blog post, we have explored the fundamental principles of NLP, including its key components such as syntactic analysis, semantic understanding, and contextual interpretation. These elements work together to enable machines to process, analyze, and generate human language in a way that is meaningful.

The significance of NLP in modern technology cannot be overstated. As we navigate an increasingly digital world, NLP applications such as voice assistants, chatbots, and language translation tools are becoming essential. They enhance user experiences, streamline processes, and facilitate communication across diverse languages and cultures. Businesses are leveraging NLP to analyze customer feedback, optimize marketing strategies, and improve customer service. The implications of NLP extend far beyond efficiency; they encompass accessibility and inclusivity in technology.

Furthermore, advancements in NLP technology continue at a rapid pace. Machine learning, particularly deep learning, has revolutionized how language models understand and generate text. Innovations like transformer models and pre-trained language models are enhancing the capabilities of NLP and introducing novel applications. It is crucial for individuals and organizations to stay informed about these developments and their potential impacts.

In conclusion, NLP has a profound influence on technology and human interaction. As NLP technologies evolve, they hold the potential to redefine how we communicate. Understanding how NLP works and following current trends in the field makes it easier to appreciate that influence and to grasp what new advancements mean for our daily lives.