Introduction to AI Model Training Costs

Artificial Intelligence (AI) has transformed many industries by enabling machines to learn from data and improve their performance over time. One of the critical phases in developing an effective AI model is training, which involves adjusting the model’s parameters based on large amounts of data. This process carries considerable financial implications, collectively referred to here as AI model training costs.

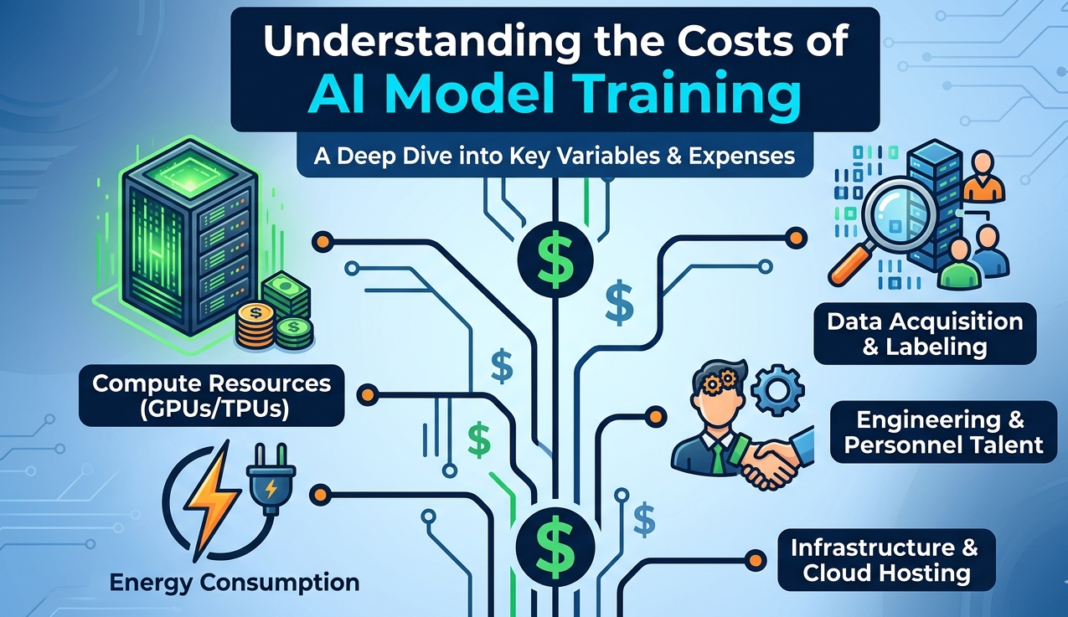

AI model training costs encompass a range of expenses, including data acquisition, computational resources, and human expertise. Collecting and preparing high-quality datasets is often one of the most significant components, as the data forms the foundation upon which models learn. Moreover, the computational resources required for processing these datasets—especially for deep learning models—can be substantial, necessitating the use of powerful graphics processing units (GPUs) or cloud-based solutions. Each of these resources incurs its own costs, contributing to the overall financial investment.

Understanding AI model training costs is crucial for businesses and developers for several reasons. Firstly, having a clear grasp of these costs enables organizations to budget effectively and allocate resources appropriately, ensuring that they do not overspend on AI initiatives. Additionally, recognizing the cost factors can assist organizations in making informed decisions regarding the feasibility of their AI projects. In an era where precision and efficiency can lead to competitive advantages, contextualizing expenses related to AI model training is essential.

To sum up, comprehending the costs associated with AI model training is vital for businesses and developers looking to invest in AI technologies. By doing so, they can enhance their strategic planning and achieve more effective implementation of AI solutions in their operations.

Factors Influencing AI Model Training Costs

Training an AI model incurs various costs driven by numerous factors. One of the primary considerations is data acquisition. High-quality, diverse datasets are crucial for training effective AI models. Depending on the nature and specificity of the data required, costs can escalate significantly. For instance, proprietary data may require substantial licensing fees, while publicly available datasets might demand extensive cleaning and preprocessing efforts, further contributing to costs.

Another critical aspect is the computational resources necessary for model training. The hardware and software used can affect both the expense and efficiency of the process. Powerful GPUs or TPUs are often essential for complex model training, and their prices can vary widely depending on the specifications and the duration they are utilized. Furthermore, cloud computing platforms offer scalable resources but can become costly if workloads are not efficiently managed, leading to unexpected spikes in expenses.

The complexity of the model itself also plays a vital role in determining training costs. Models built on advanced architectures, such as the deep neural networks used in natural language processing or computer vision, require more computational power and time than simpler algorithms. As model complexity increases, so do the costs of computation and of the expertise needed to design and implement the model. Striking a balance between the desired model performance and the resources available is therefore essential for managing costs.

Overall, understanding these factors allows organizations to better anticipate and manage the expenses linked to AI model training. By weighing the quality of data, the requisite computational capabilities, and model complexity, businesses can make more informed decisions about their AI initiatives.

Data Acquisition and Preprocessing Costs

One of the primary factors contributing to the overall expense of training an AI model is the data acquisition and preprocessing stage. This process is crucial as the quality of data directly influences the performance of the AI model. In essence, the costs involved in acquiring data can vary significantly based on several key factors, including the source and the volume of data required.

Data acquisition costs can include expenses related to purchasing datasets, outsourcing data collection, or even allocating in-house resources for gathering information. Depending on the domain, proprietary data sources can be particularly costly, while public datasets may offer more budget-friendly options but may require additional time to filter for quality. Additionally, the sheer volume of data plays a pivotal role; larger datasets generally incur higher costs not only in terms of acquisition but also in storage and management.
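The cost components described above (purchase or licensing, collection labor, and storage that grows with volume) can be added up in a few lines. The following is a minimal sketch, not a pricing model: the class name, field names, and every figure in the example are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass
class DataCosts:
    """Hypothetical inputs for a rough data acquisition estimate."""
    licensing_fee: float              # one-time fee for proprietary data (0 for public data)
    collection_hours: float           # in-house or outsourced hours spent gathering and filtering
    hourly_labor_rate: float          # cost per hour of that labor
    dataset_size_gb: float            # volume of data to keep
    storage_rate_per_gb_month: float  # storage price per GB per month
    retention_months: int             # how long the data is stored


def data_acquisition_cost(c: DataCosts) -> float:
    """Sum licensing, labor, and storage costs."""
    labor = c.collection_hours * c.hourly_labor_rate
    storage = c.dataset_size_gb * c.storage_rate_per_gb_month * c.retention_months
    return c.licensing_fee + labor + storage


# Illustrative comparison: a licensed dataset that needs little filtering
# versus a free public dataset that needs much more filtering time.
proprietary = DataCosts(20_000, 40, 50, 500, 0.02, 12)
public = DataCosts(0, 300, 50, 500, 0.02, 12)

print("proprietary:", data_acquisition_cost(proprietary))
print("public:", data_acquisition_cost(public))
```

With these made-up inputs the public dataset comes out cheaper, but the gap narrows as filtering hours grow, which is the trade-off the paragraph describes.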

Once the data has been acquired, preprocessing becomes the next significant cost driver. This step involves cleaning the data to eliminate inaccuracies, handling missing values, and organizing it into a usable format. The preprocessing phase can be labor-intensive and often requires specialized expertise, depending on the complexity of the data. For instance, unstructured data like images or text requires more extensive processing before it can be utilized for training than structured data like spreadsheets.
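As a rough illustration of the cleaning steps just mentioned, the sketch below drops records that lack required fields and fills a missing numeric value with the median of the observed values. The function name, field names, and the choice of median imputation are assumptions made for this example; real pipelines typically use dedicated tooling and domain-specific rules.

```python
from statistics import median


def clean_records(records, numeric_field, required_fields):
    """Return cleaned copies of the records.

    - Records missing any required field (None or empty string) are dropped.
    - A missing value in numeric_field is replaced by the median of observed values.
    """
    # Keep only rows where every required field is present and non-empty.
    kept = [
        dict(r) for r in records
        if all(r.get(f) not in (None, "") for f in required_fields)
    ]

    # Compute the fill value from the rows that actually have a number.
    observed = [r[numeric_field] for r in kept if r.get(numeric_field) is not None]
    fill = median(observed) if observed else None

    for r in kept:
        if r.get(numeric_field) is None:
            r[numeric_field] = fill
    return kept


# Hypothetical raw data with one empty label and one missing size.
raw = [
    {"id": 1, "label": "cat", "size": 10},
    {"id": 2, "label": "", "size": 20},
    {"id": 3, "label": "dog", "size": None},
    {"id": 4, "label": "dog", "size": 30},
]
cleaned = clean_records(raw, numeric_field="size", required_fields=["id", "label"])
print(cleaned)
```

Even a small routine like this has to be written, reviewed, and rerun whenever the data changes, which is where much of the preprocessing labor cost comes from.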

Moreover, the quality of the data is essential; high-quality data is often more expensive, yet it leads to better-performing models, reducing the risk of future costs associated with model re-training or correction. Therefore, organizations must balance the costs of acquiring high-quality data with their training budgets while ensuring the final dataset is suitable for effective AI model training.

Computational Resources and Infrastructure

The computational resources and infrastructure used for model training play a pivotal role in determining overall costs. Businesses and researchers alike must make strategic decisions about how to deploy these resources to optimize both performance and expenses. One of the primary choices is between cloud computing and on-premises infrastructure.

Cloud Computing

Cloud computing has gained substantial traction due to its scalability and flexibility. Major providers, such as Amazon Web Services (AWS), Google Cloud Platform (GCP), and Microsoft Azure, offer on-demand computing power, which permits users to pay only for the resources they utilize. This model can significantly reduce upfront infrastructure costs, especially for startups and companies operating with limited budgets. However, it is essential to consider the long-term implications of cloud costs based on storage, processing time, and data transfer fees, which can accumulate during extensive training sessions.
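To see how storage, processing time, and data transfer fees accumulate, a simple pay-as-you-go estimate can help. This is a sketch under stated assumptions: the function, its parameters, and the rates in the example are hypothetical placeholders, not actual prices from AWS, GCP, or Azure, and real bills include items not modeled here.

```python
def cloud_training_cost(gpu_count, hours, gpu_hourly_rate,
                        storage_gb, storage_rate_per_gb_month, months,
                        egress_gb, egress_rate_per_gb):
    """Break a cloud training bill into compute, storage, and data transfer."""
    compute = gpu_count * hours * gpu_hourly_rate
    storage = storage_gb * storage_rate_per_gb_month * months
    transfer = egress_gb * egress_rate_per_gb
    return {
        "compute": compute,
        "storage": storage,
        "transfer": transfer,
        "total": compute + storage + transfer,
    }


# Hypothetical run: 8 GPUs for 100 hours, 1 TB stored for one month,
# 200 GB transferred out. All rates are made up for illustration.
estimate = cloud_training_cost(
    gpu_count=8, hours=100, gpu_hourly_rate=2.5,
    storage_gb=1000, storage_rate_per_gb_month=0.02, months=1,
    egress_gb=200, egress_rate_per_gb=0.09,
)
for item, cost in estimate.items():
    print(f"{item}: {cost:.2f}")
```

Keeping the components separate makes it easier to see which one dominates as a project scales.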

On-Premises Infrastructure

On the other hand, on-premises infrastructure presents a different set of advantages and challenges. Investing in dedicated hardware allows organizations to have complete control over their AI training environment, which may lead to enhanced performance for specific workloads. However, the initial capital investment can be substantial, as purchasing high-end GPUs, CPUs, and networking equipment incurs significant costs. Furthermore, organizations must account for maintenance expenses, electricity consumption, and the staffing needed to manage the hardware. This option can prove advantageous for organizations requiring high security or custom configurations ideal for their unique workflows.
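One way to compare the two options is a break-even estimate: how many months of cloud spending it takes to equal the upfront hardware purchase, once on-premises running costs are taken into account. The sketch below assumes constant monthly usage and ignores hardware depreciation and resale value; the function name and all figures are hypothetical.

```python
def break_even_months(hardware_capex, onprem_monthly_opex,
                      cloud_hourly_rate, gpu_hours_per_month):
    """Months until buying hardware becomes cheaper than renting.

    Returns None when the cloud is cheaper every month, so the
    purchase never pays for itself at this usage level.
    """
    cloud_monthly = cloud_hourly_rate * gpu_hours_per_month
    monthly_saving = cloud_monthly - onprem_monthly_opex
    if monthly_saving <= 0:
        return None
    return hardware_capex / monthly_saving


# Hypothetical figures: 120,000 for hardware, 2,000 per month for power,
# maintenance, and staffing, versus 2.5 per GPU-hour in the cloud.
heavy_use = break_even_months(120_000, 2_000, 2.5, gpu_hours_per_month=2_000)
light_use = break_even_months(120_000, 2_000, 2.5, gpu_hours_per_month=400)

print("heavy use break-even (months):", heavy_use)
print("light use break-even (months):", light_use)
```

The result is very sensitive to utilization: hardware that sits idle most of the month may never recover its purchase price.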

Hardware Choices and Costs

Regardless of the chosen model, hardware selection greatly influences the overall expenses. High-performance components, such as GPUs designed for deep learning, can be costly yet are necessary for efficient model training. Additionally, the balance between required computational power and budget constraints necessitates careful planning to ensure that the selected configuration meets the demands of specific AI applications without incurring excessive expenditure.

Training Duration and Its Cost Implications

The duration of training is a critical factor in the overall cost of an AI model. Longer training runs consume more compute, energy, and storage, and therefore lead to higher financial outlays.

One of the primary considerations is the computational power required for running extensive training sessions. AI models, particularly those that harness deep learning techniques, necessitate substantial processing capabilities, which often translates to the use of powerful GPUs or cloud-based computing solutions. As training durations extend, the demand for such computational resources intensifies, consequently driving up costs.

Moreover, longer training sessions also lead to increased consumption of other resources, such as energy and storage. When AI models are trained for extended periods, the electricity used to power the hardware can accumulate significantly, particularly for facilities that operate large data centers. It’s important to recognize that these costs are not merely one-time expenses but represent ongoing operational expenditures that contribute to the overall budget for AI development.
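The electricity component can be approximated from the hardware's power draw and the length of the run. The following sketch assumes a constant power draw per GPU and a single multiplier for facility overhead such as cooling; the function name, the overhead factor, and the example figures are all hypothetical.

```python
def energy_cost(hours, gpu_count, watts_per_gpu, price_per_kwh, overhead_factor=1.0):
    """Estimate the electricity cost of a training run.

    overhead_factor scales the GPU energy to account for cooling and
    other facility overhead (1.0 means no overhead is counted).
    """
    kwh = hours * gpu_count * watts_per_gpu / 1000 * overhead_factor
    return kwh * price_per_kwh


# Hypothetical run: 8 GPUs at 500 W each, electricity at 0.10 per kWh,
# with 50% facility overhead.
for hours in (100, 200, 400):
    cost = energy_cost(hours, gpu_count=8, watts_per_gpu=500,
                       price_per_kwh=0.10, overhead_factor=1.5)
    print(f"{hours} hours: {cost:.2f}")
```

Under these assumptions the cost grows linearly with training time, so doubling the duration doubles the electricity bill.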

In addition to direct costs associated with computational resources, there are indirect costs to consider. For instance, longer training times can delay project timelines, impacting the competitive advantage of deploying AI solutions promptly. This aspect is important for organizations aiming to leverage AI for business innovation or operational efficiency.

In summary, the training duration of AI models plays a crucial role in determining the total costs associated with their development. Organizations must carefully evaluate and balance the benefits of extended training time against the foreseeable financial implications. By optimizing training duration and computational resource allocation, it is possible to manage costs more effectively while achieving desirable levels of model performance.

Human Resource Costs in AI Development

The human resource costs associated with AI model training represent a significant portion of the overall investment in artificial intelligence projects. To successfully train and implement AI models, organizations must focus on hiring skilled personnel, which includes data scientists, machine learning engineers, and other specialized roles. These professionals bring a diverse set of skills and knowledge essential for developing AI systems that deliver intended outcomes.

Data scientists are at the forefront of AI development, as they are responsible for extracting insights from data and formulating algorithms that help train AI models. Their salaries can vary greatly based on experience, expertise, and geographic location. Moreover, machine learning engineers play a crucial role in the actual deployment and optimization of these models, ensuring that they run efficiently and produce the desired results. As the demand for skilled AI professionals increases, organizations are often faced with competitive compensation packages to attract top talent, further contributing to human resource costs.

In addition to salaries, organizations must consider the costs related to continuous training and development. As AI technologies evolve rapidly, it becomes crucial for these specialists to stay updated with the latest advancements and methodologies. This requirement often necessitates additional investment in training sessions, workshops, and conferences, which overall adds to the human resource expenditure.
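For budgeting purposes, salaries and ongoing training can be combined into a single project-level figure. This is a simplified sketch: the function, the overhead rate (standing in for benefits, equipment, and similar costs), and the salaries in the example are hypothetical and not drawn from any salary survey.

```python
def personnel_cost(team, project_months, overhead_rate=0.0,
                   training_budget_per_person=0.0):
    """Estimate staffing cost for a project.

    team is a list of (annual_salary, headcount) tuples.
    overhead_rate is added on top of salary, e.g. 0.25 for 25%.
    """
    headcount = sum(n for _, n in team)
    # Prorate annual salaries to the length of the project.
    salaries = sum(salary * n for salary, n in team) * project_months / 12
    return salaries * (1 + overhead_rate) + training_budget_per_person * headcount


# Hypothetical team: two data scientists and one machine learning engineer
# working on a six-month project.
team = [(150_000, 2), (180_000, 1)]
total = personnel_cost(team, project_months=6, overhead_rate=0.25,
                       training_budget_per_person=2_000)
print("estimated personnel cost:", total)
```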

Ultimately, cutting corners in hiring or neglecting ongoing training can lead to poor development outcomes, rendering the investment in human resources all the more critical. Skilled personnel are indispensable for ensuring that AI projects are executed effectively and yield successful results, highlighting the importance of addressing human resource costs in AI development comprehensively.

Cost-Benefit Analysis of AI Models

Conducting a cost-benefit analysis for AI model development is a critical step that enables organizations to assess the viability of their projects. This process involves evaluating both the expenses incurred during the training of artificial intelligence models and the anticipated benefits that result from their implementation. Training an AI model consists of various expenses including data acquisition, computational resources, and the expertise needed to develop and maintain the system.

To begin with, it is essential to establish a clear understanding of the costs involved. This includes direct costs such as hardware and software investments, coupled with indirect costs like the time commitment of skilled personnel. The level of complexity involved in the model, alongside the quality and volume of data utilized for training, significantly impacts expenses. Understanding these elements allows businesses to create more accurate budgets and avoid unexpected financial burdens.

On the other side of the equation, organizations must evaluate the potential returns on investment (ROI) from deploying the AI model. This entails forecasting the benefits that the AI model could bring, such as increased efficiency, improved decision-making, and enhanced customer experience. Organizations may quantify these benefits in terms of cost savings, revenue growth, or market competitiveness. By comparing these projected gains with the identified costs, firms can gauge the overall profitability of the AI model.

Additionally, it is prudent for businesses to consider the timeframe for achieving ROI and the risks associated with AI model deployment. The longer it takes for a model to generate returns, the greater the exposure to changing market conditions. Therefore, examining both the short-term and long-term perspectives is vital in making an informed decision about proceeding with AI development.
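The comparison of projected gains against costs, and the time needed to recover the investment, can be expressed with two standard formulas: ROI as net benefit divided by cost, and payback period as upfront cost divided by the net benefit per month. The sketch below assumes a constant monthly benefit and uses hypothetical figures throughout.

```python
def roi(total_cost, total_benefit):
    """Return on investment as a fraction (0.5 means a 50% return)."""
    return (total_benefit - total_cost) / total_cost


def payback_months(upfront_cost, monthly_net_benefit):
    """Months needed to recover the upfront cost.

    Returns None when the model generates no net benefit,
    since the investment is never recovered.
    """
    if monthly_net_benefit <= 0:
        return None
    return upfront_cost / monthly_net_benefit


# Hypothetical project: 200,000 to build, 300,000 in projected benefits,
# arriving at roughly 25,000 per month after deployment.
print("ROI:", roi(200_000, 300_000))
print("payback (months):", payback_months(200_000, 25_000))
```

A project with an attractive ROI but a long payback period carries more exposure to changing market conditions, which is why both figures are worth computing.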

Future Trends in AI Training Costs

The landscape of artificial intelligence (AI) is rapidly evolving, with significant implications for the costs associated with training AI models. One prominent trend is the advancement of hardware technologies. As the performance of Graphics Processing Units (GPUs) and specialized AI chips continues to improve, the cost per unit of computation is likely to decrease, making training more accessible and cost-effective. With cloud computing also becoming standard practice, organizations can use scalable resources without substantial investments in infrastructure.

Innovative techniques in AI training are also anticipated to influence future costs. For instance, transfer learning can substantially reduce the amount of data and time required for training: by starting from a pre-trained model, organizations can minimize computational demands, leading to lower overall expenses. Federated learning, which trains models across decentralized data sources without gathering the data in one place, can reduce the need to collect and centralize data. Additionally, optimization algorithms that enhance model efficiency can result in faster training times and reduced energy consumption, further driving down costs.

The market demand for AI solutions is another critical factor that may shape training costs. As more sectors adopt AI technologies, a competitive environment is likely to develop, pushing organizations towards cost-effective training methods. This heightened demand may incentivize the development of open-source tools and frameworks, fostering collaboration and lowering barriers to entry for businesses looking to implement AI solutions. Furthermore, as industries mature in their use of AI, a more standardized approach to model training is expected to emerge, providing clearer benchmarks for cost estimation and management.

In conclusion, the combination of technological advances, innovative training techniques, and evolving market dynamics is poised to influence the costs of AI model training significantly. By staying attuned to these trends, organizations can strategically navigate the complexities of AI expenses and make informed decisions for their future investments in this transformative technology.

Conclusion: Navigating AI Training Costs Effectively

Understanding the costs associated with AI model training is vital for any organization looking to leverage this powerful technology. As discussed throughout this blog post, several factors influence these costs, including hardware, data acquisition, and specialized talent. Businesses must approach these factors with a clear strategy to effectively manage and optimize their investments in AI.

One significant aspect to consider is the choice of infrastructure. Cloud-based services, for instance, can offer scalability and flexibility, allowing companies to pay for only what they use. Conversely, on-premises solutions may entail high upfront costs but can provide long-term savings for organizations with substantial ongoing needs. Understanding your specific requirements and how they align with available resources is crucial in making the right decision.

Data management is another critical factor, and organizations should prioritize data quality over quantity. Although acquiring vast amounts of data can be expensive, investing in high-quality, relevant datasets can drastically improve model performance and reduce overall training costs. Furthermore, techniques such as transfer learning and batch training can help mitigate costs and enhance efficiency.

Finally, fostering collaboration among multidisciplinary teams—including data engineers, machine learning researchers, and business analysts—allows for a more comprehensive understanding of potential risks and cost drivers. Engaging in continuous education and staying updated on the latest AI developments can also equip teams with the knowledge needed to make informed decisions.

In summary, navigating AI training costs effectively involves acknowledging these multifaceted factors and making strategic choices that align with business goals. By doing so, organizations can optimally utilize their resources, ultimately leading to successful AI implementations and a sustainable competitive advantage.